Prompt engineering for language teachers is simpler than it sounds. ChatGPT, Claude, and Gemini all work better when you give them clear, specific instructions, and this chapter covers the five principles that make the difference: specificity, context, examples, format, and iteration. Follow them and you'll get usable language exercises, reading texts, and lesson plans in one or two tries, not five.


Why Do Some Prompts Work Better Than Others?

Specific prompts produce better output because they narrow the model's search space. The more context you give, the less the AI has to guess. Large language models (like ChatGPT, Claude, and Gemini) are trained on enormous amounts of text. During training, they form connections between words, phrases, and concepts that tend to appear together. When you give the model a prompt, it uses those connections to predict what text should come next.

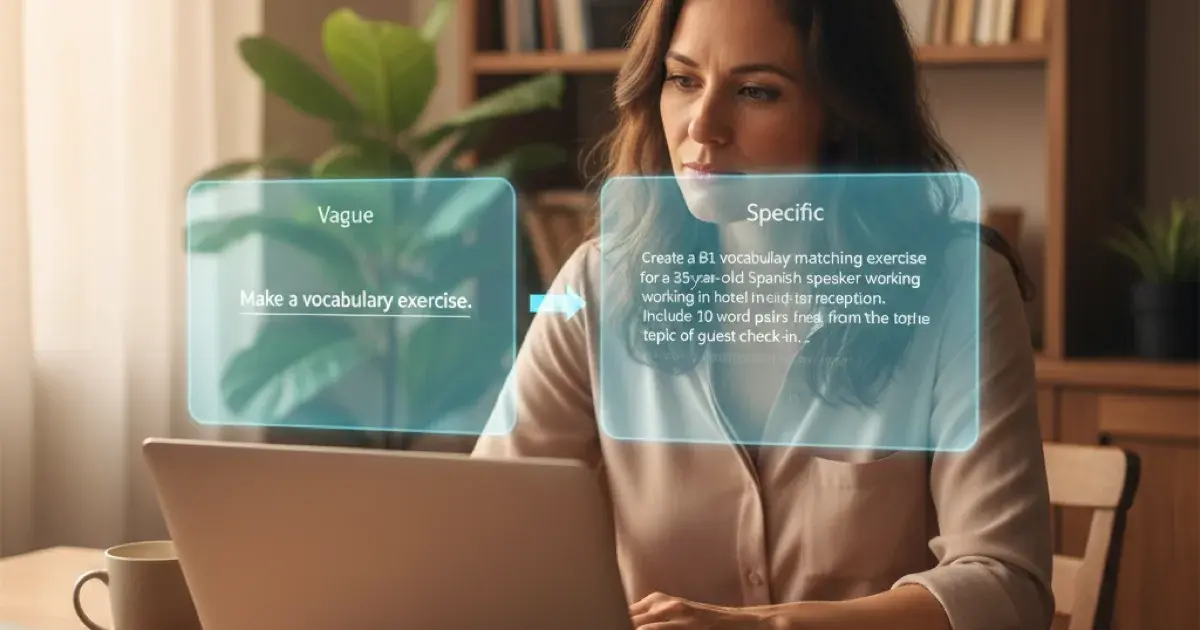

When you write "create a vocabulary exercise," the model has seen that phrase in thousands of different contexts, from children's workbooks to university linguistics papers to corporate training manuals. It doesn't know which context you mean, so it picks from all of them. The result is generic, and probably not what you need.

When you write "create a B1 vocabulary matching exercise for an adult ESL learner about hotel vocabulary," you narrow the space the model draws from. In other words, you prime it toward text related to those terms and concepts. "B1" steers it toward CEFR-aligned teaching materials. "ESL" steers it toward English as a Second Language resources. "Hotel vocabulary" narrows the topic. The model is now drawing from a much more relevant part of its training, and the output reflects that.
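
If you're curious what "predicting what comes next" looks like in miniature, here is a toy sketch in Python. It is a drastic simplification and not how real models are built: the four-sentence `corpus` and the `next_words` function are invented for illustration. It only counts which word follows a phrase, to show that a longer, more specific phrase leaves fewer plausible continuations.

```python
from collections import Counter

# A made-up, four-sentence "training set" (an assumption for illustration only).
corpus = [
    "create a vocabulary exercise for children with pictures",
    "create a vocabulary exercise for linguistics students on morphology",
    "create a vocabulary exercise for corporate training on compliance",
    "create a b1 vocabulary exercise for adult esl learners about hotels",
]


def next_words(context: str) -> Counter:
    """Count which words directly follow `context` anywhere in the corpus."""
    ctx = context.lower().split()
    n = len(ctx)
    counts: Counter = Counter()
    for sentence in corpus:
        words = sentence.split()
        # Slide a window of n words over the sentence and record what comes next.
        for i in range(len(words) - n):
            if words[i:i + n] == ctx:
                counts[words[i + n]] += 1
    return counts


print(len(next_words("vocabulary exercise for")))   # 4
print(next_words("b1 vocabulary exercise for"))     # Counter({'adult': 1})
```

With the short phrase, four different continuations are equally plausible. Adding "b1" leaves only one.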

What Are the Five Principles of Effective AI Prompts?

The five principles are: be specific, provide context, show what you want, specify the format, and iterate. Each one is a practical way to prime the model and work with it toward the output you actually need.

1. Be specific

The more detail you put into your prompt, the more you narrow what the model produces. Generic input produces generic output.

Vague prompt:

Make me a vocabulary exercise.

Specific prompt:

Create a vocabulary matching exercise (word to definition) with 10 items about hotel and travel vocabulary for an adult ESL learner at B1 level. Include an answer key.

The second prompt tells the AI what type of exercise to create, how many items, what topic, who the learner is, what level, and what to include alongside it (an answer key). Each detail steers the model closer to what you actually need.
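
If you write many similar prompts, you can keep the same details as fields and assemble the prompt automatically. The sketch below is one hypothetical way to do that in Python. The `ExerciseSpec` class and its field names are assumptions made for illustration, not part of any AI tool.

```python
from dataclasses import dataclass


@dataclass
class ExerciseSpec:
    """The details a specific prompt should pin down (hypothetical helper)."""
    exercise_type: str
    item_count: int
    topic: str
    learner: str
    level: str
    answer_key: bool = True

    def to_prompt(self) -> str:
        # Assemble one sentence that states every detail explicitly.
        prompt = (
            f"Create a {self.exercise_type} with {self.item_count} items "
            f"about {self.topic} for {self.learner} at {self.level} level."
        )
        if self.answer_key:
            prompt += " Include an answer key."
        return prompt


spec = ExerciseSpec(
    exercise_type="vocabulary matching exercise (word to definition)",
    item_count=10,
    topic="hotel and travel vocabulary",
    learner="an adult ESL learner",
    level="B1",
)
print(spec.to_prompt())
```

Because every field is required except the answer key, the structure itself reminds you when a detail is missing.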

💡 Use the words your profession uses

The terms that are common in language teaching also tend to appear in the AI's training data alongside relevant teaching content, so using them helps steer the output further toward what you probably want. Instead of "English learner," try "ESL learner" (or a similar term for the language you teach). Similarly, "B1 level" is more useful than "intermediate" because CEFR levels have specific expectations attached to them in the teaching literature the model has seen. While the word "English" probably appears in loads of texts in the model's training data, including fiction, the more technical terms probably appear in fewer irrelevant materials. Also, some relevant materials may use only the technical term. Watch out for abbreviations and terms that are ambiguous, especially in short prompts. If you don't get what you expect, try being deliberately redundant, for example "for an adult ESL learner learning English at B1 level". The extra words cost you little and help the model land in the right context.

2. Provide context

Give the model enough context to steer it in the right direction. For instance, tell the AI who the learner is. This narrows the output from "generic exercise" to "exercise that fits this person." Level, native language, profession, interests, learning goals: each detail you add gives the model more to work with.

Example prompt:

My student is an adult professional, a software engineer from South Korea, learning English at B1 level. She needs to improve her ability to participate in team meetings and give short presentations. Create 8 vocabulary items related to giving opinions and agreeing/disagreeing in meetings, with example sentences showing each word in a professional context.

Notice how "software engineer" steers toward tech workplace language, "South Korea" could influence cultural context, and "team meetings and presentations" narrows it from all of Business English to specific situations she'll actually encounter. You don't need to write a biography. But every relevant detail you include may mean less editing afterwards.
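
If you teach the same learners week after week, you could store each profile once and prepend it to any task. A minimal sketch, assuming a plain dictionary of labelled details. The function name `with_learner_context` and the labels are hypothetical, and empty details are simply skipped.

```python
def with_learner_context(task: str, profile: dict[str, str]) -> str:
    """Prepend the known learner details to a task; skip any that are empty."""
    lines = [f"- {label}: {value}" for label, value in profile.items() if value]
    if not lines:
        return task
    return "Learner profile:\n" + "\n".join(lines) + "\n\n" + task


profile = {
    "Level": "B1 (CEFR)",
    "Profession": "software engineer",
    "Country": "South Korea",
    "Native language": "",  # unknown here, so it is left out of the prompt
    "Goal": "participate in team meetings and give short presentations",
}
task = (
    "Create 8 vocabulary items related to giving opinions and "
    "agreeing/disagreeing in meetings, with example sentences showing "
    "each word in a professional context."
)
print(with_learner_context(task, profile))
```

A labelled list like this carries the same information as the prose version above. Which one works better for you is worth testing rather than assuming.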

3. Show what you want

If you've ever shown a student an example of what a good essay looks like before asking them to write one, you already understand this principle. Providing an example of the output you're looking for, even a partial one, is a powerful technique for getting consistent, usable results. The model uses your example to understand the format, the tone, the level of detail, and the style you expect. The technical term is "few-shot prompting," and we'll cover it in more depth in the advanced chapter. For now, just know that showing can beat telling.

Example prompt:

Create 5 vocabulary items in this format:

Word: affordable

Definition: not expensive; reasonably priced

Example: "The restaurant is popular because the food is delicious and affordable."

Synonym: reasonably priced, budget-friendly

Now create 5 items about apartment vocabulary for a B1 learner looking for housing.

By providing one complete example, you've told the AI exactly how you want each item structured. The format, the level of detail in the definition, the style of the example sentence, the inclusion of synonyms: all of it is communicated through the example rather than through instructions.
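
The same idea can be sketched in Python: keep one or more model items as data and paste them into the prompt ahead of the request. The function name `few_shot_prompt` and the field labels are assumptions for illustration. The example item is the one from the prompt above.

```python
def few_shot_prompt(examples: list[dict[str, str]], request: str) -> str:
    """Show the examples first, then make the actual request."""
    blocks = [
        "\n".join(f"{field}: {value}" for field, value in example.items())
        for example in examples
    ]
    return (
        "Create vocabulary items in this format:\n\n"
        + "\n\n".join(blocks)
        + "\n\n"
        + request
    )


example_item = {
    "Word": "affordable",
    "Definition": "not expensive; reasonably priced",
    "Example": '"The restaurant is popular because the food is delicious and affordable."',
    "Synonym": "reasonably priced, budget-friendly",
}
request = "Create 5 items about apartment vocabulary for a B1 learner looking for housing."
print(few_shot_prompt([example_item], request))
```

Passing two or three example items instead of one may make the pattern even clearer to the model, at the cost of a longer prompt.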

4. Specify the format

Tell the AI exactly how to structure the output. How many items? Should there be an answer key? A teacher version and a learner version? Should it be a table, a list, or a paragraph?

Many common exercise formats already carry implicit formatting. If you ask for "a fill-in-the-blank exercise," the model will likely produce sentences with blanks or underscores. Ask for "a dialog script" and you'll probably get something with speaker labels. These terms prime the model toward familiar formats because it has seen thousands of examples of each. Sometimes that's enough.

You need to be more specific when the default doesn't match what you need. Compare:

Brief example:

Create a fill-in-the-blank exercise about present perfect.

Detailed example:

Create a fill-in-the-blank exercise about present perfect with 8 sentences. Use a context of travel experiences. Format as:

- Learner version: numbered sentences with blanks

- Teacher version: the same sentences with correct answers in bold and brief notes on common errors

The first version will produce a usable exercise most of the time. The second version makes it far more likely that you get separate teacher and learner versions with the structure you want.
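
If you generate exercises in bulk, you may want a quick check that the output follows the format you asked for. A rough sketch, assuming blanks are rendered as three or more underscores and items are numbered like "1." or "1)". The function name `count_gap_items` and the sample text are made up for illustration.

```python
import re

# A numbered line ("1." or "1)") that contains a run of 3+ underscores.
BLANK_ITEM = re.compile(r"^\s*\d+[.)]\s+.*_{3,}")


def count_gap_items(text: str) -> int:
    """Count numbered lines that contain at least one blank."""
    return sum(1 for line in text.splitlines() if BLANK_ITEM.match(line))


sample = """Learner version
1. I ____ (visit) Japan twice.
2. She ____ never ____ (fly) in a helicopter.

Teacher version
1. I **have visited** Japan twice.
"""
print(count_gap_items(sample))  # 2
```

If you asked for 8 sentences and the count comes back as 6, that is a cue to ask the AI for a correction rather than fix it by hand.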

5. Iterate, don't settle

If the output is not what you want, don't settle. For a small correction you might just fix it by hand, but it's completely normal to ask the AI to adjust, refine, or redo parts of what it produced.

Example prompts:

"Make the sentences shorter, this is for A2 level."

"The vocabulary is too easy. Push it up to B2."

"Replace items 3 and 7 with words more relevant to healthcare."

"Add an answer key."

"Actually, make this a multiple-choice exercise instead."

Each of these is a normal part of the workflow. You're not failing if the first output isn't perfect. You're using the tool correctly. And each refinement instruction further steers the model toward what you need.
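
One way to picture iteration is as a growing conversation: every refinement is added on top of what came before, so the model sees the whole history. The sketch below models that as a plain Python list. The `role` and `content` keys resemble the message layout many chat tools use, but treat them as an assumption. The `add_turn` helper is hypothetical, and no real AI service is called.

```python
Message = dict[str, str]


def add_turn(history: list[Message], role: str, content: str) -> list[Message]:
    """Return a new history with one more message appended."""
    return [*history, {"role": role, "content": content}]


history: list[Message] = []
history = add_turn(history, "user", "Create a fill-in-the-blank exercise about present perfect.")
history = add_turn(history, "assistant", "<first draft from the model>")
history = add_turn(history, "user", "Make the sentences shorter, this is for A2 level.")
history = add_turn(history, "assistant", "<revised draft from the model>")
history = add_turn(history, "user", "Add an answer key.")

for message in history:
    print(f"{message['role']}: {message['content']}")
```

Because earlier turns stay in the history, a short instruction such as "Add an answer key." is enough. You don't have to repeat the original request.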

💡 Don't fight the AI, work with it

AI output will sometimes be wrong. It will sometimes be mediocre. It will sometimes miss the mark entirely. This is normal, and it's worth being honest about it. Try not to think about whether the output is perfect, but whether it saved you time overall. If correcting an AI draft takes 10 minutes but creating the same material from scratch would have taken 45, you still came out well ahead. If the AI produced something you can't use at all, try refining your prompt before giving up. If one or two adjustments to your instructions fix the problem, you might still be ahead. Over time, you'll develop a sense for which tasks AI handles well, which require more refinement or manual adjustments, and which aren't worth the trouble. That intuition is valuable, but you can only build it by experimenting, much like progress in a language.

What Does a Great Prompt Look Like?

A great prompt gives information about the learner (e.g. level, language, profession), specifies the exact format (e.g. length, structure, number of items), and shows a sample of what you want. Let's see this and the principles in action. Watch how the same basic request transforms as we add specificity.

Bad: vague, no context

Make a reading exercise.

This could produce literally anything. A C2 academic text. A children's story. An exercise with no questions. The model has to guess everything, and it will most likely guess wrong.

Good: adds context and format

Create a short reading text (150-200 words) about a first day at a new job, suitable for an adult ESL learner at B1 level. Include 5 comprehension questions.

Much better. The AI now knows the topic, length, audience, level, and format. This will produce something usable most of the time.

Great: adds learner profile, format detail, and shows what you want

My student is a 35-year-old Brazilian software developer learning English at B1 level. He recently changed jobs and needs English for his daily work.

Create a short reading text (150-200 words) about a first day at a new tech company. The text should use simple past and present perfect (structures we're currently working on).

Include:

1. 3 true/false/not stated questions

2. 5 vocabulary items from the text with definitions

3. 1 discussion question connecting the text to the student's own experience

Format the vocabulary items like this:

onboarding (noun): the process of introducing a new employee to a company

"The onboarding process included setting up my laptop and meeting the team."

Every detail steers the model closer to what you actually need. That said, don't feel you always need to write the "great" version. The model already knows a lot from the terms you use. "B1 gap-fill exercise on present perfect" carries a surprising amount of implicit information, because the model has seen thousands of such exercises in its training data. Start with a level of detail that feels natural, see what the model produces, and add more specificity if the output isn't right. Some AI tools also learn your preferences over time, so prompts that needed a lot of detail at first may need less as you build a history with the tool.

Which Prompting Framework Should You Use? (PACO, TATTOO, Cambridge)

The short answer is any of them. Several people have created frameworks, often acronyms, as scaffolding to help remember prompting principles, and they all package the same core ideas differently. The underlying principles matter more than the framework name. Here are three you might encounter:

- PACO. Persona, Action, Context, Output. Tell the AI who it is, what to do, the context, and what format you want.

- TATTOO. An ESL-specific framework: Task, Actor, Target language, Topic, Output, Other details.

- Cambridge's 7 Ingredients. A detailed framework from Cambridge University Press: task, role, audience, purpose, format, constraints, example.

These frameworks all point to the same underlying idea we've covered: give the model enough context to prime it for the output you actually need. Try whichever resonates with you, or ignore them all and just remember: be specific, provide context, show what you want, specify the format, and iterate. The frameworks are scaffolding; the principles are what matter. You'll see the principles in action and more concrete prompts in the second part of this guide, starting in chapter 5 on vocabulary, where we build full exercises from scratch.

Next: Translanguaging with AI

Curious about how your learners' mother tongue can actually help them learn faster? The next chapter covers translanguaging with AI, a practical technique for using the languages your learners already know.